Microsoft Purview Pricing and Applications

Microsoft Purview Pricing and introduction of Purview Applications

The Microsoft Purview pricing page has been updated. Below I have listed most of the changes. The most important ones are the introduction of the Microsoft Purview Applications and the pricing of Insights Generation. In addition, the metadata storage included in the standard level of 1 Capacity Unit (which supports 25 operations per second) has been increased from 2 GB to 10 GB.

This post was updated on April 25th.

Microsoft Purview Data Map

The Microsoft Purview Data Map stores metadata, annotations and relationships associated with data assets in a searchable knowledge graph.

Data Map is billed across three types of activities:

- Data Map Population: examples include metadata and lineage extraction, or classification based on metadata and content inspection.

- Data Map Enrichment: examples include the use of resource sets to optimize storage of data lake assets, or the aggregation of classifications to generate insights.

- Data Map Consumption: examples include serving up search results or rendering the lineage graph. This also includes the use of the Apache Atlas API to build apps on the Data Map.

Data Map Population

Automated Scanning, Ingestion & Classification

Data Map population is serverless and billed based on the duration of scans (which include metadata extraction and classification) and ingestion jobs. Automated scans using native connectors trigger both scan and ingestion jobs. Push-based updates from a Microsoft Purview client (e.g., lineage pushed from Azure Data Factory or Azure Synapse Analytics) only trigger ingestion jobs.

| | Price |
|---|---|
| For Power BI online | Free for a limited time |
| For SQL Server on-prem | Free for a limited time |
| For other data sources | €0.582 per 1 vCore Hour |
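To make the population billing concrete, here is a minimal Python sketch of the cost of a single scan. It assumes the rule described above (scan duration in hours x vCores x price per vCore hour) and the 32 vCores per scan used in the pricing example later in this post. The helper `scan_cost`, the `FREE_SOURCES` set and the sample source name are hypothetical; the free sources are only free "for a limited time", so treat that branch as temporary.

```python
# Minimal sketch (hypothetical helper, not a Purview API): cost of one scan in EUR.
SCAN_PRICE_PER_VCORE_HOUR = 0.582  # "other data sources" price from the table above

# Sources listed as "Free for a limited time" on the pricing page (may change).
FREE_SOURCES = {"Power BI online", "SQL Server on-prem"}


def scan_cost(source: str, duration_minutes: float, vcores: int = 32) -> float:
    """Scan duration (hours) x vCores x price per vCore hour; 0 for free sources."""
    if source in FREE_SOURCES:
        return 0.0
    return duration_minutes / 60 * vcores * SCAN_PRICE_PER_VCORE_HOUR


# A 4-hour scan of a non-free source with 32 vCores:
print(round(scan_cost("Azure Data Lake Storage Gen2", 240), 2))  # 74.5
# The same scan of Power BI online is currently free:
print(scan_cost("Power BI online", 240))  # 0.0
```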

Data Map Enrichment

Advanced Resource Set

Advanced Resource Set is a built-in feature of the Data Map used to optimize the storage and search of data assets associated with partitioned files in data lakes. Billing for processing the resource set data assets is serverless and based on the duration of the processing, which can vary with changes in the partitioned files and the configured resource set profile. You can toggle the feature on or off in the Management Center.

Note: By default, advanced resource set processing runs every 12 hours for all the systems configured for scanning with the resource set toggle enabled.

| | Price |
|---|---|
| Advanced Resource Set | €0.194 per 1 vCore Hour |
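Because processing runs on a fixed schedule, the monthly resource set cost is mostly driven by how long each run takes. Below is a minimal Python sketch assuming the default 12-hour interval from the note above. The run duration and vCore count are inputs you would have to measure yourself; the values in the usage line (1 hour per run, 8 vCores, mirroring the example later in this post) are assumptions, and the helper name is hypothetical.

```python
RESOURCE_SET_PRICE_PER_VCORE_HOUR = 0.194  # Advanced Resource Set price
RUN_INTERVAL_HOURS = 12                     # default processing interval


def resource_set_cost(days: int, hours_per_run: float, vcores: int) -> float:
    """Monthly Advanced Resource Set cost: runs x duration x vCores x price."""
    runs = days * 24 // RUN_INTERVAL_HOURS  # 30 days -> 60 runs
    return runs * hours_per_run * vcores * RESOURCE_SET_PRICE_PER_VCORE_HOUR


# Assumed: each run takes 1 hour on 8 vCores.
print(round(resource_set_cost(30, 1.0, 8), 2))  # 93.12
```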

Insights Generation

Insights Generation aggregates metadata and classifications in the raw Data Map into enriched, executive-ready reports that can be visualized in the Data Estate Insights application, and into granular asset-level information in a business-friendly format that can be exported. Report visualization and export incur charges under Insights Report Consumption in the Data Estate Insights application.

| | Price |
|---|---|
| Report Generation | €0.758 per 1 vCore Hour |

Insights Generation is new to me; currently it looks like it costs around €70.00.

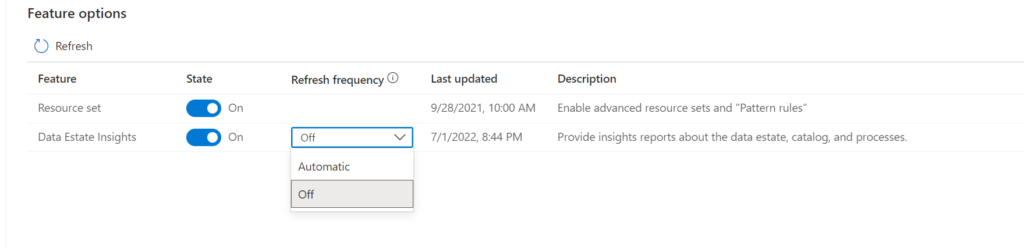

Note: By default, Insights Generation is enabled and provisioned, and it can be turned off in the Management Center of the Microsoft Purview governance portal, which now has a toggle for it. If the toggle is on and the report frequency is set to off, you can still see the reports from the most recent report generation. If the frequency is set to automatic, your reports are refreshed based on your scanning and activity in the portal. Currently, the automatic refresh is weekly.
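Since report generation is billed per vCore hour, one way to read the roughly €70 mentioned above is to convert it back into vCore hours. This is a minimal Python sketch with hypothetical helper names; `vcore_hours_per_refresh` is an assumed input, because the pricing page does not say how many vCores or hours one report generation run uses.

```python
REPORT_GENERATION_PRICE_PER_VCORE_HOUR = 0.758  # Insights Generation price


def insights_generation_cost(refreshes: int, vcore_hours_per_refresh: float) -> float:
    """Monthly cost: number of refreshes x vCore hours per refresh x price."""
    return refreshes * vcore_hours_per_refresh * REPORT_GENERATION_PRICE_PER_VCORE_HOUR


def implied_vcore_hours(cost_eur: float) -> float:
    """How many vCore hours a given Insights Generation charge corresponds to."""
    return cost_eur / REPORT_GENERATION_PRICE_PER_VCORE_HOUR


print(round(implied_vcore_hours(70.0), 1))  # 92.3
```

With the current weekly automatic refresh (roughly 4 refreshes a month), `insights_generation_cost(4, hours)` gives the monthly figure once you know the vCore hours per refresh.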

If the toggle is off, the Insights Generation activity gives you the following warning:

Data Map Consumption

Elastic Data Map

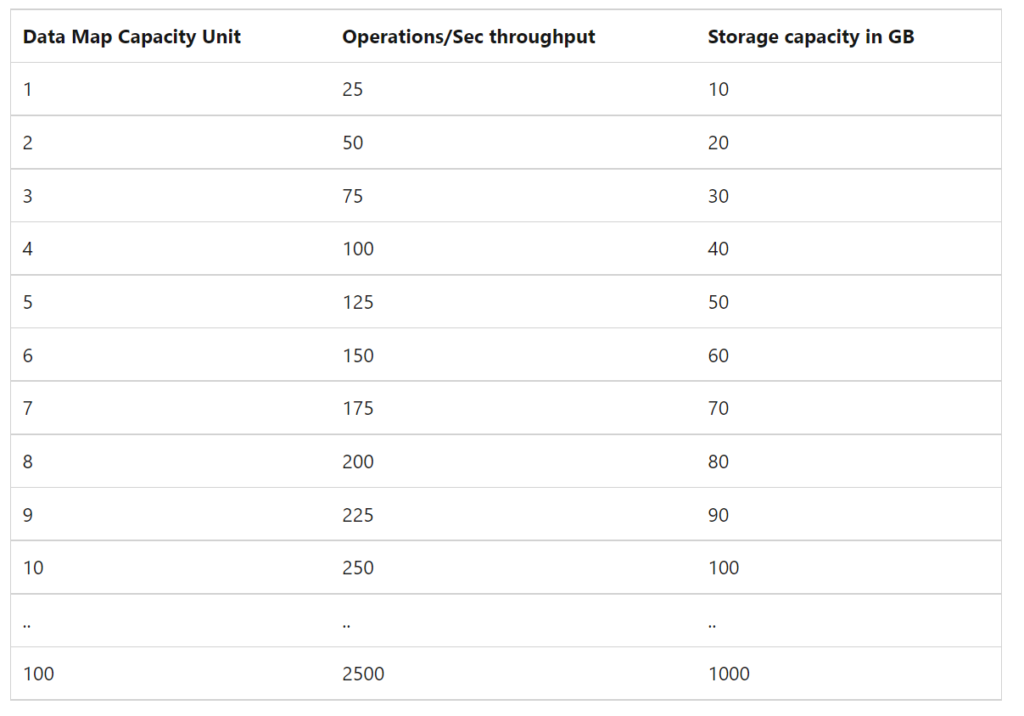

By default, a Microsoft Purview account is provisioned with a Data Map of at least 1 Capacity Unit. 1 Capacity Unit supports requests of up to 25 data map operations per second and includes storage of up to 10 GB of metadata about data assets.

| | Price |
|---|---|
| Capacity Unit | €0.380 per 1 Capacity Unit Hour |

Note: Until last week, 1 Capacity Unit included 2 GB of storage; this has been increased to 10 GB, which is a major change.
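To see why the storage change matters, here is a minimal Python sketch that sizes the steady-state Data Map. It assumes the CU count has to cover whichever requirement is larger: the operations-per-second load (25 per CU) or the metadata storage (10 GB per CU, previously 2 GB). That "larger of the two" rule is my assumption for illustration, not a formula from the pricing page, and the helper names are hypothetical.

```python
import math

OPS_PER_CU = 25            # data map operations per second per Capacity Unit
CU_PRICE_PER_HOUR = 0.380  # price per Capacity Unit hour
HOURS_PER_MONTH = 730


def capacity_units(ops_per_second: float, metadata_gb: float, gb_per_cu: float = 10) -> int:
    """Assumed sizing rule: enough CUs for both throughput and storage, minimum 1."""
    for_ops = math.ceil(ops_per_second / OPS_PER_CU)
    for_storage = math.ceil(metadata_gb / gb_per_cu)
    return max(1, for_ops, for_storage)


def monthly_data_map_cost(cus: int) -> float:
    """Always-on Data Map cost: CUs x price per CU hour x 730 hours."""
    return cus * CU_PRICE_PER_HOUR * HOURS_PER_MONTH


# 15 GB of metadata at a light load of 20 operations per second:
print(capacity_units(20, 15))               # 2 with the new 10 GB per CU
print(capacity_units(20, 15, gb_per_cu=2))  # 8 with the old 2 GB per CU
print(round(monthly_data_map_cost(1), 2))   # 277.4
```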

Microsoft Purview Applications

Microsoft Purview Applications replace the C0, C1 and D0 options we had previously. They are a set of independently adoptable but highly integrated user experiences built on the Data Map, including Data Catalog, Data Estate Insights and more. These applications are used by data consumers, producers, data stewards and officers, and they enable enterprises to ensure that data is easily discoverable, understood and of high quality, and that all use complies with corporate and regulatory requirements.

Data Catalog

Data Catalog is an application built on Data Map for use by business users, data engineers and stewards to discover data, identify lineage relationships and assign business context quickly and easily.

| | Price |
|---|---|
| Search and browse of data assets | Included with the Data Map |
| Business Glossaries | Included with the Data Map |
| Lineage Visualization | Included with the Data Map |
| Self-Service Data Access | Free in preview |

Data Estate Insights

| | Price |
|---|---|
| Insights Consumption | €0.194 per API call |

Note: Insights consumption is billed per API call. One API call returns up to 10,000 rows of tabular results. As with Insights Generation, I have no idea yet what this will do to the cost. As soon as this is available, I will update this article.
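Because each call returns at most 10,000 rows, the number of billed calls grows in steps with the row count. A minimal Python sketch, assuming one billed call per started block of 10,000 rows (so a partially filled last page still counts as a full call); the helper name is hypothetical.

```python
import math

INSIGHTS_PRICE_PER_API_CALL = 0.194  # Insights Consumption price
ROWS_PER_API_CALL = 10_000           # maximum rows returned by one call


def insights_consumption_cost(rows: int) -> float:
    """Calls needed to fetch `rows` rows, times the price per call."""
    calls = math.ceil(rows / ROWS_PER_API_CALL)
    return calls * INSIGHTS_PRICE_PER_API_CALL


print(round(insights_consumption_cost(25_000), 3))  # 3 calls -> 0.582
```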

Data Access Policies for SQL and Data Lakes (preview)

Data owners can centrally manage thousands of SQL Servers and data lakes to enable quick and easy access to data assets mapped in the Data Map for performance monitors, auditors, and data users.

| | Price |
|---|---|
| SQL DevOps access | Free in preview |
| Data Lake data asset access | Free in preview |

Workflows (Preview)

Data owners and stewards can automate commonly used repetitive tasks associated with business processes like glossary curation and approval tracking using workflow management.

| | Price |
|---|---|
| Business Workflows | Free in preview |

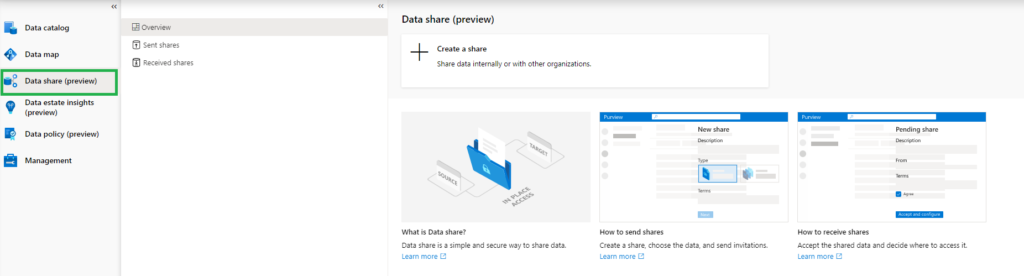

Data Sharing (Preview)

In-place Data Sharing lets users share data easily from within the Microsoft Purview governance portal, both within and between organizations, providing near real-time access to data without duplication.

| | Price |
|---|---|
| In-place sharing for Azure Blob Storage and Azure Data Lake Storage (ADLS Gen2) storage accounts | Free |

More details on data sharing in Microsoft Purview can be found here.

Pricing Example

Based on the example published on the pricing page, I have done a calculation:

Example Scenario:

Data Map can scale capacity elastically based on the request load. Request load is measured in terms of data map operations per second. As a cost control measure, a Data Map is configured by default to elastically scale up to a peak of 8 times the steady state capacity.
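The elastic behaviour can be sketched as a clamp: the Data Map scales up to match the load, but by default no further than 8 times the steady-state capacity. This is a minimal Python sketch assuming 25 operations per second per CU, as stated earlier; the function name and the way load is converted into CUs are illustrative assumptions, not Purview's actual scaling algorithm.

```python
import math

OPS_PER_CU = 25      # operations per second served by one Capacity Unit
PEAK_MULTIPLIER = 8  # default elastic cap: 8x steady-state capacity


def elastic_capacity_units(steady_cus: int, ops_per_second: float) -> int:
    """CUs needed for the current load, never below steady state, capped at 8x."""
    needed = math.ceil(ops_per_second / OPS_PER_CU)
    return max(steady_cus, min(needed, steady_cus * PEAK_MULTIPLIER))


print(elastic_capacity_units(1, 100))  # 4: load needs 4 CUs, within the cap
print(elastic_capacity_units(1, 500))  # 8: load would need 20 CUs, capped at 8
```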

For dev/trial usage:

Data Map (always on): average of 2 capacity units x price per capacity unit per hour x 730 hours per month

Scanning (pay as you go): total duration (in minutes) of all scans in a month / 60 minutes per hour x 32 vCores per scan x €0.582 per vCore per hour

Resource Set: total duration (in hours) of processing resource set data assets in a month x price per vCore per hour

The total cost per month for Azure Purview = cost of Data Map + cost of Scanning + cost of Resource Set

Assume the scenario above with an average of 2 Capacity Units, no more than 10 GB of metadata storage, and one 4-hour scan of our data per week:

Data Map: 2 CU x €0.380 per CU hour x 730 hours = €554

Scanning: 4 scans x 4 hours x 32 vCores x €0.582 per vCore per hour = €297

Resource Set: 30 days x 2 runs per day (every 12 hours) x 8 vCores x €0.194 per vCore per hour = €93

In total, that is €944 per month including 4 scans, with Data Estate Insights excluded. If you leave Microsoft Purview as is without any scanning, your base fee will be €277 per month for 1 CU, provided the Resource Set toggle is switched off.

Data Estate Insights (Insights Generation, weekly): 4 runs x 8 vCores x 4 hours x €0.758 per vCore per hour = €97
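The arithmetic above can be reproduced with a short Python sketch. It hard-codes the example's assumptions (2 CUs on average, four 4-hour scans at 32 vCores, two resource set runs per day at 8 vCores, and four 4-hour report generations at 8 vCores). The 1 hour per resource set run is my assumption, implied by the €93 figure. The amounts above are truncated to whole euros, so the unrounded subtotal comes out at about €945.90 rather than €944.

```python
CU_PRICE_PER_HOUR = 0.380
SCAN_PRICE_PER_VCORE_HOUR = 0.582
RESOURCE_SET_PRICE_PER_VCORE_HOUR = 0.194
REPORT_GENERATION_PRICE_PER_VCORE_HOUR = 0.758
HOURS_PER_MONTH = 730


def monthly_estimate(avg_cus: float = 2) -> dict:
    """Monthly cost per component (EUR) for the example scenario."""
    return {
        "data_map": avg_cus * CU_PRICE_PER_HOUR * HOURS_PER_MONTH,
        # 4 scans x 4 hours x 32 vCores
        "scanning": 4 * 4 * 32 * SCAN_PRICE_PER_VCORE_HOUR,
        # 30 days x 2 runs x 1 hour (assumed) x 8 vCores
        "resource_set": 30 * 2 * 1 * 8 * RESOURCE_SET_PRICE_PER_VCORE_HOUR,
        # 4 weekly runs x 8 vCores x 4 hours
        "insights_generation": 4 * 8 * 4 * REPORT_GENERATION_PRICE_PER_VCORE_HOUR,
    }


costs = monthly_estimate()
subtotal = costs["data_map"] + costs["scanning"] + costs["resource_set"]
print({name: round(value, 2) for name, value in costs.items()})
print(round(subtotal, 2))  # 945.9 (excluding Insights Generation)
print(round(monthly_estimate(avg_cus=1)["data_map"], 2))  # 277.4 base fee for 1 CU
```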

As always, if you have questions, leave them in the comments or send me a message.


So if I’m not mistaken, the minimal cost of Purview is €280 per month, even for dev scenarios? (meaning I can’t even try it out with my free Azure credits)

Sorry Koen, but that’s true. I use Microsoft Purview in our company tenant. For dev/test you have to deploy it, run your tests, and then delete it again. Hopefully they will change this in the future.

Hey Erwin, any insight into how many capacity units are needed for a medium enterprise, e.g., about 100 consumers (SQL)? These units are hard to estimate without a trial and load test, and that’s hard to do for a UI app. Thank you, Chuck.

Hi Chuck,

The number of CUs depends more on how many sources you want to scan, the scan interval, and how many classifications you assign.

I usually estimate between 800 and 1,000 per month. Sources that do not change often do not have to be scanned every week; they can also be scanned monthly or even manually, which saves you quite a few units. The default is always 1 CU, which scales where necessary depending on the amount of metadata stored. You can always delete the instance if the costs get too high.

With best regards,

Erwin