This week the Azure Purview product team added some new functionality: new connectors (these were added during my holiday), Azure Synapse data lineage, a better Power BI integration and the introduction of the Elastic Data Map. Slowly we are on our way to GA status, and on September 28, 2021 there will be a digital event. Please find some of the announcements in detail below.

New connectors in Azure Purview

Over the past period the Azure Purview team has worked hard and has already added a number of new connectors, such as ERWIN, Looker, Cassandra and Google BigQuery.

This week it was time for some new functionalities.

Azure Synapse Analytics Data Lineage:

This functionality currently only works for the copy activity, but the first step has been made. For lineage from Azure Data Factory you had to create the link in Azure Purview; for lineage from Azure Synapse it is the other way around: you create the link to Azure Purview in Azure Synapse. I described how to create this link a couple of months ago in one of my posts, which can be found here.

Some known limitations on copy activity lineage based on the docs.

Currently, if you use the following copy activity features, the lineage is not yet supported:

- Copy data into Azure Data Lake Storage Gen1 using Binary format.

- Copy data into Azure Synapse Analytics using PolyBase or COPY statement.

- Compression setting for Binary, delimited text, Excel, JSON, and XML files.

- Source partition options for Azure SQL Database, Azure SQL Managed Instance, Azure Synapse Analytics, SQL Server, and SAP Table.

- Source partition discovery option for file-based stores.

- Copy data to file-based sink with setting of max rows per file.

- Add additional columns during copy.

In addition to lineage, the data asset schema (shown in the Asset -> Schema tab) is reported for the following connectors:

- CSV and Parquet files on Azure Blob, Azure File Storage, ADLS Gen1, ADLS Gen2, and Amazon S3

- Azure Data Explorer, Azure SQL Database, Azure SQL Managed Instance, Azure Synapse Analytics, SQL Server, Teradata

Power BI

The Power BI integration now supports automated discovery of columns, measures and data types in Power BI.

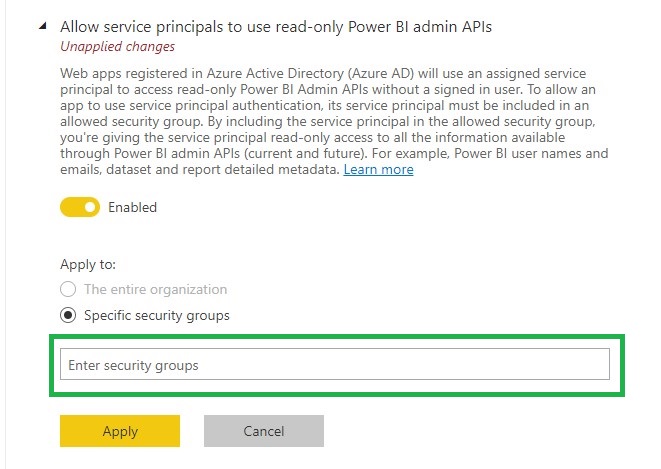

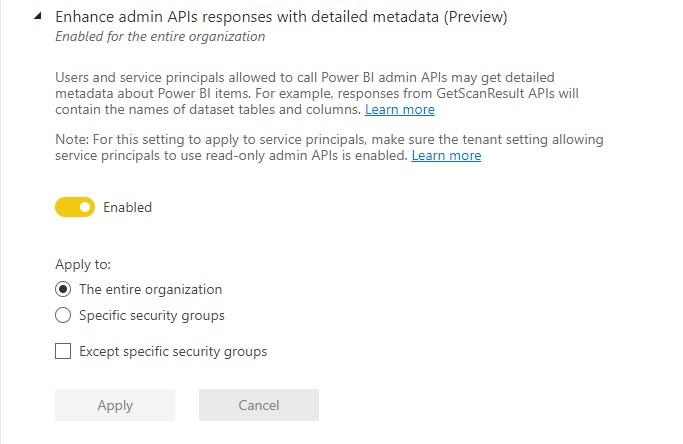

To enable this functionality, you must enable the following settings on the Power BI tenant settings page (be aware that you need to be a Power BI admin):

- Allow service principals to use read-only Power BI admin APIs. To use this setting, create a security group or use an existing one, and add your Purview account to this security group.

- Enhance admin APIs responses with detailed metadata.

Elastic data map in Azure Purview

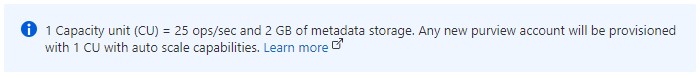

All Purview accounts created after August 18, 2021 are now created with the new Elastic Data Map concept. With this new concept your Purview account comes with one capacity unit by default and grows elastically based on usage. Each Data Map capacity unit includes a throughput of 25 operations/sec and a metadata storage limit of 2 GB. So when you are not using Purview, you are no longer paying for the default value of 4 capacity units.

The Data Map is billed on an hourly basis. You are billed for the maximum number of Data Map capacity units needed within the hour. At times you may need more operations/second within the hour, and this will increase the number of capacity units needed within that hour. At other times your operations/second usage may be low, but you may still need a large volume of metadata storage; in that case the metadata storage determines how many capacity units you need within the hour. Please read the documentation for a more detailed explanation and some examples.
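
To make the arithmetic a bit more concrete, here is a minimal sketch of how the number of capacity units for one hour could be estimated. It only uses the two figures mentioned above (25 operations/sec and 2 GB per capacity unit). The function name, the rounding up to whole units and the minimum of one unit are my own assumptions for illustration, not an official Azure Purview formula, so please check the documentation for the exact rules.

```python
import math

# Figures taken from the text above; everything else is an assumption.
OPS_PER_CAPACITY_UNIT = 25        # operations/sec included in one capacity unit
STORAGE_GB_PER_CAPACITY_UNIT = 2  # GB of metadata storage included in one capacity unit


def capacity_units_for_hour(peak_ops_per_sec: float, metadata_storage_gb: float) -> int:
    """Estimate the capacity units needed within one hour (hypothetical helper).

    Assumes usage is rounded up to whole capacity units and that the larger
    of the throughput-based and storage-based requirement is what counts,
    with a minimum of one capacity unit.
    """
    by_throughput = math.ceil(peak_ops_per_sec / OPS_PER_CAPACITY_UNIT)
    by_storage = math.ceil(metadata_storage_gb / STORAGE_GB_PER_CAPACITY_UNIT)
    return max(1, by_throughput, by_storage)


# A quiet hour with little metadata stays at the single default unit.
print(capacity_units_for_hour(peak_ops_per_sec=10, metadata_storage_gb=1))
# A busy hour: throughput drives the number of units.
print(capacity_units_for_hour(peak_ops_per_sec=60, metadata_storage_gb=1))
# Low throughput but a lot of metadata: storage drives the number of units.
print(capacity_units_for_hour(peak_ops_per_sec=5, metadata_storage_gb=7))
```

Under these assumptions the three calls give 1, 3 and 4 capacity units: the second hour is driven by throughput and the third by metadata storage.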

All existing Azure Purview accounts will be migrated to the Elastic Data Map concept in September/October.

The big question that remains open is: what exactly does a capacity unit cost? For the time being, during the preview, it is still free, as can be read on the updated pricing page of Azure Purview.

More clarity about pricing and about when Azure Purview goes GA is likely to come during the event on September 28. You can register for this event via the link below.

EVENT=>Achieve unified data governance with Azure Purview

As always, in case you have any questions, please feel free to contact me.
